In 2025, AI-generated content is as common as bees in a garden. It shows up everywhere from ads to striking digital art. But behind this output there is a growing sense of “AI regret”: people are deleting work they initially liked because they wish they hadn’t pressed “generate” so quickly. The trend shows how hard it is to embrace AI’s power while also dealing with its challenges.

Every day, billions of words and millions of AI images are created, but people are increasingly wary of that content once it exists. The early enthusiasm focused on how AI could make work faster and better. Now many people are unsure about its quality, ethics, and trustworthiness. Instant content can be useful, but it can also go wrong, so creators often have to quickly delete or revise output that falls short of their standards, seems biased, or could damage their reputations.

This friction is clearest in sensitive areas like healthcare, where AI-generated material that was rushed to publication has caused mistakes, sometimes without the client’s permission, and has led to rapid removals and redactions. These instances show that AI is still only a tool that needs careful human judgment, especially when lives or reputations are at stake. Some people are learning the hard way that AI output needs substantial human oversight to keep it from harming people or spreading incorrect information.

New AI-detection tools and changing platform rules are also making creators rethink the content they produce with AI. Companies that use AI to boost their SEO or digital marketing are now in a risky position: they need to stay both useful and compliant as Google and other platforms make it harder to publish AI content that hasn’t been verified. Knowing how to use AI safely and transparently is now essential.

These problems are real, but “AI regret” doesn’t mean we should stop using generative AI. Rather, it is a sign that the technology is maturing. It pushes people to find new safeguards, which raises the bar for quality control, ethics, and transparency. By pairing AI tools with people who oversee the output and add creativity, content creators can make their operations far more efficient without exposing themselves to unnecessary risk.

Trust remains crucial. Because only about 14% of customers currently fully trust AI-generated material, it’s vital to address issues like bias, privacy, and accountability. Businesses are increasingly hiring AI ethics specialists and developing policies to help rebuild trust in AI and encourage responsible use.

Improving the relationship between people and AI will pay off in the future. Regret tends to come with maturity. Instead of simply rejecting AI, we need to work toward better, more ethical AI integration. People who are willing to learn these lessons and follow ethical guidelines can help build digital content ecosystems that are more valuable, more trustworthy, and more transparent, and that help new ideas grow.

—

**What You Should Know About Deleting Content and AI Regret:**

– AI content generation is moving fast, but people need to review the output carefully to make sure it is accurate and meets ethical standards.

– When AI-generated content doesn’t meet trust, quality, or ethical standards, users delete or retract it.

– Creators are increasingly hesitant to use AI content because of platform rules and AI-detection tools.

– Many people don’t fully trust AI-generated content, which shows how important transparency, freedom from bias, and data protection are.

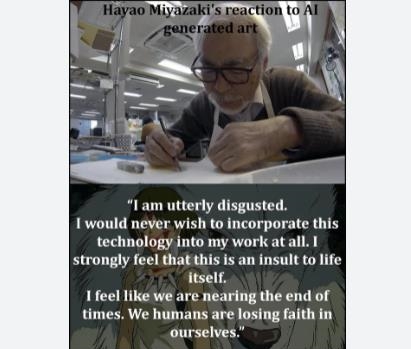

– Experts warn that AI’s speed should be balanced by human creativity and ethical oversight to avoid pitfalls.

– AI regret is a sign that the tech ecosystem is maturing and looking for better collaboration between people and AI rather than abandoning the technology.

By dealing with AI regret head-on, people and businesses can get the most out of AI’s potential to do good and help make the technology smarter and more reliable in the future.